Merge Sort Time Complexity

Merge sort is an algorithm for sorting an array. It is based on the divide-and-conquer technique and sorts an array of n elements in O(n log n) time, which is also its worst-case time complexity. Merge sort first divides the array into two halves of (nearly) equal size, sorts each half, and then combines them in sorted order. The algorithm itself is simple.

Suppose (for simplicity) that n = 2^k for some integer k. Let T(n) be the time used to sort n elements. As we can perform the separation and the merging in linear time, these two steps take cn time for some constant c. So, T(n) = 2T(n/2) + cn.

In the same way:

T(n/2) = 2T(n/4) + cn/2, so

T(n) = 4T(n/4) + 2cn. Continuing in this way, after m steps, T(n) = 2^m T(n/2^m) + mcn, and for m = k

T(n) = 2^k T(n/2^k) + kcn = nT(1) + cn log_2 n = O(n log n). Remember that, since n = 2^k, k = log_2 n. The general case requires a bit more work, but it takes O(n log n) time anyway.
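The unrolled recurrence can be checked numerically. The following is a minimal Python sketch and not part of the original derivation; the names `merge_sort_cost` and `closed_form`, and the default values c = 1 and T(1) = 1, are assumptions chosen only for illustration.

```python
import math


def merge_sort_cost(n, c=1.0, t1=1.0):
    """Evaluate T(n) = 2*T(n/2) + c*n with T(1) = t1, for n a power of two."""
    if n == 1:
        return t1
    return 2 * merge_sort_cost(n // 2, c, t1) + c * n


def closed_form(n, c=1.0, t1=1.0):
    """Closed form n*T(1) + c*n*log2(n) obtained by unrolling the recurrence."""
    return n * t1 + c * n * math.log2(n)


# Compare both expressions for n = 1, 2, 4, ..., 1024.
for k in range(11):
    n = 2 ** k
    assert math.isclose(merge_sort_cost(n), closed_form(n))
```

For n = 8 with the default constants, both functions give 8·1 + 1·8·3 = 32.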

How do you calculate time complexity for heap sort?

What is the time complexity for merge sort in best case?

The best case for merge sort is O(n log n), the same as its worst case. The running time satisfies the recurrence T(n) = 2T(n/2) + O(n) regardless of the order of the input. Jimmy Clinton Malusi (JKUAT-Main Campus)

Which one is the best and efficient sort?

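Quick sort or merge sort, both of which have an average time complexity of O(n log n). Quick sort is usually the more efficient of the two in practice, in terms of both time and space, although its worst case is O(n^2).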

What is the time complexity for merge sort in average case?

MergeSort has the same time complexity in all cases, O(n log n). The exact number of comparisons varies somewhat with the initial state of the array, but the asymptotic bound does not.

Time complexity of merge sort?

The time-complexity of merge sort is O(n log n). At each level of recursion, the merge process is performed on the entire array. (Deeper levels work on shorter segments of the array, but these are called more times.) So each level of recursion is O(n). There are O(log n) levels of recursion, since the array is approximately halved each time. The best-case time-complexity is also O(n log n), so mergesort takes just as long no…
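The claim that every level of recursion handles the whole array once can be observed by instrumenting a simple merge sort. This is only an illustrative sketch; the function name `merge_sort_levels` and the `work` dictionary are hypothetical helpers, not part of any library.

```python
from collections import defaultdict


def merge_sort_levels(items, depth=0, work=None):
    """Sort items and record, per recursion depth, how many elements are merged."""
    if work is None:
        work = defaultdict(int)
    if len(items) <= 1:
        return list(items), work
    mid = len(items) // 2
    left, _ = merge_sort_levels(items[:mid], depth + 1, work)
    right, _ = merge_sort_levels(items[mid:], depth + 1, work)
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    work[depth] += len(merged)  # this merge touched len(merged) elements
    return merged, work


result, work = merge_sort_levels(list(range(16, 0, -1)))
print(dict(sorted(work.items())))  # {0: 16, 1: 16, 2: 16, 3: 16}
```

With 16 elements there are log2(16) = 4 merging levels, and each one moves all 16 elements, giving 4 × 16 element moves in total.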

What is the time complexity of an algorithm?

The time complexity of an algorithm is the length of time to complete the algorithm given certain inputs. Usually, the algorithm with the best average time will be selected for a task, unless it can be proven that a certain class of conditions has to exist for an average time, in which case an algorithm that is faster in certain cases will be chosen based on that characteristic. For example, given the option of a…

Calculate the Time and Space complexity for the Algorithm to add 10 numbers?

The algorithm will have both a constant time complexity and a constant space complexity: O(1)

What is the average case time complexity of the quick sort algorithm?

The average-case time complexity of the quick sort algorithm is O(n log n); its worst case is O(n^2).

What is the time complexity of radix sort?

Radix sort takes O(k·n) time for n keys of k digits each. If the keys lie in the range 1…n and a fixed base is used, k grows as log n, giving Θ(n log n); when k is a small constant, the running time is linear in n.

Time complexity of insertion sort in worst case is?

Running time of merge sort?

Merge sort (or mergesort) is an algorithm. Algorithms do not have running times since running times are determined by the algorithm's performance/complexity, the programming language used to implement the algorithm and the hardware the implementation is executed upon. When we speak of algorithm running times we are actually referring to the algorithm's performance/complexity, which is typically notated using Big O notation. Mergesort has a worst, best and average case performance of O(n log n). The…

How do you select pivot in quick sort?

Quick sort has a best-case time complexity of O(n log n) and a worst-case time complexity of O(n^2). The best case occurs when the pivot chosen at each step is the median, or close to the median, of the list, so that the list is split into two nearly equal parts. The time complexity for this case satisfies T(n) = 2T(n/2) + n. The worst case happens when the pivot is the smallest or largest element of the list. The time complexity for this can be…

What is the average case time complexity of bubble sort?

Is bubble sort more efficient technique than insertion sort?

Bubble sort and insertion sort both have the same time complexity (and space complexity) in the best, worst, and average cases. However, these are purely theoretical comparisons. In practical real-world scenarios, insertion sort (or any other sort, for that matter) will almost always be the better choice over a bubble sort.

What is the best case time complexity of selection sort?

Selection sort has no end conditions built in, so it will always compare every element with every other element. This gives it a best-, worst-, and average-case complexity of O(n^2).

What are the real time applications of merge sort?

Are selection sort and tournament sort the same?

No. Tournament sort is a variation of heapsort but is based upon a naive selection sort. Selection sort takes O(n) time to find the largest element and requires n passes, and thus has an average complexity of O(n*n). Tournament sort takes O(n) time to build a priority queue and thus reduces the search time to O(log n) for each selection, and therefore has an average complexity of O(n log n), the same as heapsort.

How do you calculate time and space complexity?

You can find an example at this link: ww.computing.dcu.ie/~away/CA313/space.pdf

What do you mean by time and space complexity of an algorithm?

what do you mean by time and space complexity and how to represent these complexity

What are the time complexity and space complexity of the DES algorithm?

time complexity is 2^57..and space complexity is 2^(n+1).

Why is quick sort called quick sort?

Quick sort has a worst-case time complexity of O(n^2), but for sorting a large amount of numbers it is very efficient in practice because of locality of reference.

What is time complexity and space complexity?

Time complexity :: The amount of computer time the program needs to run it to completion. Space complexity :: The amount of memory it needs to run to completion.

Why you need to sort an array?

In an unsorted array, a search may have to examine every element, because the element being sought can be in the last position. Once the array is sorted, an element can be found with a lower time complexity than before (for example, by binary search).

Why time complexity is better than actual running time?

Finding a time complexity for an algorithm is better than measuring the actual running time for a few reasons:

1. Time complexity is unaffected by outside factors; running time is determined as much by other running processes as by algorithm efficiency.

2. Time complexity describes how an algorithm will scale; running time can only describe how one particular set of inputs will cause the algorithm to perform.

Note that there are downsides to time complexity…

What is the time complexity of n queens problem?

What is the worst case and best case time complexity of insertion sort?

Best case for insertion sort is O(n), where the array is already sorted. The worst case, where the array is completely reversed, is O(n*n).
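The gap between the two cases can be seen by counting key comparisons. The sketch below is illustrative only; `insertion_sort_comparisons` is a hypothetical helper name, and it counts comparisons between elements rather than measuring time.

```python
def insertion_sort_comparisons(items):
    """Sort a copy of items; return (sorted_list, number_of_key_comparisons)."""
    a = list(items)
    comparisons = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        while j >= 0:
            comparisons += 1
            if a[j] <= key:
                break  # key is already in position relative to a[j]
            a[j + 1] = a[j]  # shift the larger element one slot right
            j -= 1
        a[j + 1] = key
    return a, comparisons


n = 8
print(insertion_sort_comparisons(range(n))[1])         # 7, i.e. n - 1
print(insertion_sort_comparisons(range(n, 0, -1))[1])  # 28, i.e. n * (n - 1) / 2
```

An already sorted array needs one comparison per element, while a reversed array needs i comparisons for the i-th element, which sums to n(n − 1)/2.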

What is the difference between polynomial and non polynomial time complexity?

What are the two main measures for the efficiency of an algorithm?

What is time complexity of binary search?

The best-case complexity is O(1), i.e., when the element being searched for is the middle element. The average- and worst-case time complexities are O(log n).
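A small sketch that counts probes makes both cases concrete. The function name `binary_search` and its return convention (index, number of probes) are assumptions made for this illustration.

```python
def binary_search(sorted_items, target):
    """Return (index of target or -1, number of elements probed)."""
    lo, hi = 0, len(sorted_items) - 1
    probes = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1
        if sorted_items[mid] == target:
            return mid, probes
        if sorted_items[mid] < target:
            lo = mid + 1  # target can only be in the upper half
        else:
            hi = mid - 1  # target can only be in the lower half
    return -1, probes


data = list(range(31))
print(binary_search(data, 15))  # (15, 1): the middle element, best case
print(binary_search(data, 0))   # (0, 5): about log2(31) probes, worst case
```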

When does quick sort take more time than merge sort?

Quick sort takes more time than merge sort when its pivots are chosen badly, for example when the pivot is repeatedly the smallest or largest element, as can happen with a naive pivot choice on already sorted input. Each partition then removes only one element, and the running time degrades to O(n^2), whereas merge sort always splits the array in half and stays at O(n log n).

What is difference between time complexity and running time?

'Running Time' is essentially a synonym of 'Time Complexity', although the latter is the more technical term. 'Running Time' is confusing, since it sounds like it could mean 'the time something takes to run', whereas Time Complexity unambiguously refers to the relationship between the time and the size of the input.

What is the binary search tree worst case time complexity?

What is the worst case and best case time complexity of heapsort?

The best and worst case time complexity for heapsort is O(n log n).

What is the time complexity of Dijkstra's algorithm?

Dijkstra's original algorithm (published in 1959) has a time-complexity of O(N*N), where N is the number of nodes.

Which sorting method has best perfomance in terms of storage and time complexity?

Introspective sort (introsort) is the algorithm of choice, with a worst-case time-complexity of O(n log n) and space complexity of O(log n). Introsort is an adaptive (hybrid) algorithm that starts with a quicksort and switches to heapsort when the recursion becomes too deep. Pivot selection during quicksort is critical to best performance; however, both quicksort and heapsort are unstable algorithms. If stability is required, timsort is a better option, with a time-complexity of O(n log n) and a space complexity of…

What is the Big Oh Notation?

It is used to describe the time complexity of an algorithm, where O(1) is constant time, O(n) is linear time, and O(n^2) is quadratic time. Big Oh Notation reduces the time complexity to an approximation by keeping only the dominant term. For example, if an estimated time complexity is x^2+x*5+100, Big Oh Notation would state that the time complexity is O(x^2). This is because, as x increases, the x*5 and 100 will…

What is time complexity of an algorithm?

Time complexity is a function whose value depends on the input and on the algorithm of a program, and it gives us an idea of how long the program would take to execute.

The time complexity of the sequential search algorithm is?

O(N), where N is the number of elements in the array you are searching. So it has linear complexity.

What would be appropriate measures of cost to use as a basis for comparing the two sorting algorithms?

How would you go about comparing two proposed algorithms for sorting an array of integer?

This is a broad question. I am assuming you want to compare the speed of each algorithm, and there are two ways to measure this: 1. Run both algorithms on the same machine and see how the time compares for different input sizes, e.g. start with 100, 1,000, 100,000, etc. 2. The more useful measure would be to obtain its asymptotic run time complexity. This is a mathematical technique that doesn't care about the machines an…

What is the time complexity of an algorithm?

Time complexity gives an indication of the time an algorithm will complete its task. However, it is merely an indication; two algorithms with the same time complexity won't necessarily take the same amount of time to complete. For instance, comparing two primitive values is a constant-time operation. Swapping those values is also a constant-time operation, however a swap requires more individual operations than a comparison does, so a swap will take longer even though the…

Case complexity in data structure algorithms?

The complexity of an algorithm is the function which gives the running time and/or space in terms of the input size.

What is the worst case and average case complexity of bubblesort?

Consider sorting an array of elements in ascending order using bubble sort. Bubble sort bubbles the greatest element to the last position in its first iteration, the second largest element to the second-to-last position in its second iteration, and so on. Due to this technique, the following cases occur: 1) Best case: This occurs when the array is already sorted in ascending order. In such a situation, the bubble sort algorithm simply scans through the…

What do you understand by complexity of an algorithm?

The complexity of an algorithm tells you how well the algorithm will scale when applied to different sized problem sets. Time complexity will allow you to estimate how much longer it will take. Space complexity will allow you to estimate how much more storage space will be required.

What is time complexity in data structure?

The complexity of an algorithm is a function describing the efficiency of the algorithm in terms of the amount of data the algorithm must process

What is the Time complexity of transpose of a matrix?

Transposing a matrix is O(n*m) where m and n are the number of rows and columns. For an n-row square matrix, this would be quadratic time-complexity.

Compare the time complexity for stack and queue?

An example of merge sort. First divide the list into the smallest unit (1 element), then compare each element with the adjacent list to sort and merge the two adjacent lists. Finally all the elements are sorted and merged.

| Property | Value |
|---|---|
| Class | Sorting algorithm |
| Data structure | Array |
| Worst-case performance | O(n log n) |
| Best-case performance | O(n log n) typical, O(n) natural variant |
| Average performance | O(n log n) |
| Worst-case space complexity | O(n) total with O(n) auxiliary, O(1) auxiliary with linked lists[1] |

In computer science, merge sort (also commonly spelled mergesort) is an efficient, general-purpose, comparison-based sorting algorithm. Most implementations produce a stable sort, which means that the order of equal elements is the same in the input and output. Merge sort is a divide and conquer algorithm that was invented by John von Neumann in 1945.[2] A detailed description and analysis of bottom-up mergesort appeared in a report by Goldstine and von Neumann as early as 1948.[3]

Algorithm

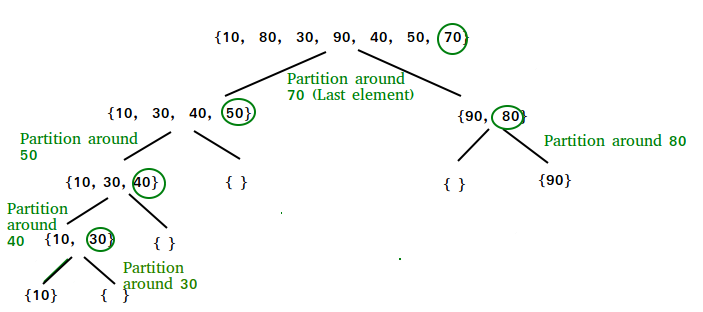

Conceptually, a merge sort works as follows:

- Divide the unsorted list into n sublists, each containing one element (a list of one element is considered sorted).

- Repeatedly merge sublists to produce new sorted sublists until there is only one sublist remaining. This will be the sorted list.
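The two conceptual steps above can be written down almost literally. The following Python sketch is only an illustration of that description; the names `merge` and `merge_sort_conceptual` are assumptions, and no attempt is made to save memory.

```python
def merge(left, right):
    """Merge two sorted lists into one sorted list (stable: left wins ties)."""
    result, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            result.append(left[i])
            i += 1
        else:
            result.append(right[j])
            j += 1
    result.extend(left[i:])
    result.extend(right[j:])
    return result


def merge_sort_conceptual(items):
    """Step 1: one-element sublists. Step 2: merge neighbours until one is left."""
    sublists = [[x] for x in items]
    while len(sublists) > 1:
        merged = []
        for i in range(0, len(sublists), 2):
            if i + 1 < len(sublists):
                merged.append(merge(sublists[i], sublists[i + 1]))
            else:
                merged.append(sublists[i])  # odd one out is carried over
        sublists = merged
    return sublists[0] if sublists else []


print(merge_sort_conceptual([38, 27, 43, 3, 9, 82, 10]))
# [3, 9, 10, 27, 38, 43, 82]
```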

Top-down implementation

The top-down merge sort algorithm, using indices, recursively splits the list into sublists (called runs) until the sublist size is 1, then merges those sublists to produce a sorted list. The copy-back step is avoided by alternating the direction of the merge with each level of recursion.
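As a rough illustration of this scheme, here is a Python sketch (Python rather than C-like code, for brevity). The function names `top_down_merge_sort`, `_split_merge` and `_merge` are assumptions made for this example. The two lists swap the roles of source and destination at each level of recursion, which is what removes the copy-back step.

```python
def top_down_merge_sort(a):
    """Sort list a in place, using one work list b of the same size."""
    b = list(a)                    # one-time copy of a into the work list
    _split_merge(b, 0, len(a), a)  # sort the data from b into a


def _split_merge(b, begin, end, a):
    """Sort the run [begin, end); the result ends up in a, with b as source."""
    if end - begin <= 1:
        return                         # a run of size 1 is already sorted
    middle = (begin + end) // 2
    _split_merge(a, begin, middle, b)  # sort left run from a into b
    _split_merge(a, middle, end, b)    # sort right run from a into b
    _merge(b, begin, middle, end, a)   # merge both runs from b into a


def _merge(src, begin, middle, end, dst):
    """Merge src[begin:middle] and src[middle:end] into dst[begin:end]."""
    i, j = begin, middle
    for k in range(begin, end):
        if i < middle and (j >= end or src[i] <= src[j]):
            dst[k] = src[i]
            i += 1
        else:
            dst[k] = src[j]
            j += 1


data = [5, 2, 9, 1, 5, 6, 0]
top_down_merge_sort(data)
print(data)  # [0, 1, 2, 5, 5, 6, 9]
```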

Bottom-up implementation

The bottom-up merge sort algorithm, using indices, treats the list as an array of n sublists (called runs) of size 1, and iteratively merges sublists back and forth between two buffers.

Top-down implementation using lists

The top-down merge sort algorithm using lists recursively divides the input list into smaller sublists until the sublists are trivially sorted, and then merges the sublists while returning up the call chain. A merge function merges the left and right sublists.

Bottom-up implementation using lists

The bottom-up merge sort algorithm using lists uses a small fixed-size array of references to nodes, where array[i] is either a reference to a list of size 2^i or nil. Here node is a reference or pointer to a node. The merge() function merges two already sorted lists and handles empty lists; it takes node references as its input parameters and returns a node reference.
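The array-of-references idea can be sketched in Python with a hand-written singly linked list. Everything below (the `Node` class, `merge`, `merge_sort_list`, the `slots` parameter and the two conversion helpers) is a hypothetical illustration under the description above, with `None` playing the role of nil.

```python
class Node:
    def __init__(self, value, next=None):
        self.value = value
        self.next = next


def merge(a, b):
    """Merge two sorted linked lists (either may be None); a wins ties."""
    dummy = tail = Node(None)
    while a is not None and b is not None:
        if a.value <= b.value:
            tail.next, a = a, a.next
        else:
            tail.next, b = b, b.next
        tail = tail.next
    tail.next = a if a is not None else b
    return dummy.next


def merge_sort_list(head, slots=32):
    """array[i] is None or a sorted list of 2**i nodes (last slot may be larger)."""
    array = [None] * slots
    node = head
    while node is not None:
        nxt, node.next = node.next, None  # detach a single node
        run, i = node, 0
        while i < slots and array[i] is not None:
            run = merge(array[i], run)    # array[i] holds earlier nodes
            array[i] = None
            i += 1
        if i == slots:
            i -= 1                        # do not go past the end of the array
        array[i] = run
        node = nxt
    result = None
    for i in range(slots):                # merge the array into a single list
        result = merge(array[i], result)
    return result


def from_list(values):
    head = None
    for v in reversed(values):
        head = Node(v, head)
    return head


def to_list(head):
    out = []
    while head is not None:
        out.append(head.value)
        head = head.next
    return out


print(to_list(merge_sort_list(from_list([4, 1, 3, 9, 7, 2]))))  # [1, 2, 3, 4, 7, 9]
```

Inserting nodes into the array behaves like incrementing a binary counter: a carry out of slot i merges two lists of size 2^i into one of size 2^(i+1).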

Natural merge sort

A natural merge sort is similar to a bottom-up merge sort except that any naturally occurring runs (sorted sequences) in the input are exploited. Both monotonic and bitonic (alternating up/down) runs may be exploited, with lists (or equivalently tapes or files) being convenient data structures (used as FIFO queues or LIFO stacks).[4] In the bottom-up merge sort, the starting point assumes each run is one item long. In practice, random input data will have many short runs that just happen to be sorted. In the typical case, the natural merge sort may not need as many passes because there are fewer runs to merge. In the best case, the input is already sorted (i.e., is one run), so the natural merge sort need only make one pass through the data. In many practical cases, long natural runs are present, and for that reason natural merge sort is exploited as the key component of Timsort.
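A minimal Python sketch of the idea follows. It only exploits non-decreasing runs (not bitonic ones), and the names `split_into_runs`, `merge` and `natural_merge_sort` are assumptions for this illustration. The second return value counts merge passes after the initial run-detection pass.

```python
def split_into_runs(items):
    """One pass over the data: cut it into maximal non-decreasing runs."""
    runs = []
    for x in items:
        if runs and runs[-1][-1] <= x:
            runs[-1].append(x)  # x extends the current run
        else:
            runs.append([x])    # x starts a new run
    return runs


def merge(left, right):
    """Merge two sorted lists into one sorted list (stable)."""
    result, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            result.append(left[i])
            i += 1
        else:
            result.append(right[j])
            j += 1
    return result + left[i:] + right[j:]


def natural_merge_sort(items):
    """Return (sorted_list, number_of_merge_passes)."""
    runs = split_into_runs(items)
    passes = 0
    while len(runs) > 1:
        runs = [merge(runs[i], runs[i + 1]) if i + 1 < len(runs) else runs[i]
                for i in range(0, len(runs), 2)]
        passes += 1
    return (runs[0] if runs else []), passes


print(split_into_runs([3, 4, 2, 1, 7, 5, 8, 9, 0, 6]))
# [[3, 4], [2], [1, 7], [5, 8, 9], [0, 6]]
print(natural_merge_sort([3, 4, 2, 1, 7, 5, 8, 9, 0, 6])[1])  # 3
print(natural_merge_sort(list(range(10)))[1])                 # 0
```

Ten items starting from runs of length 1 would need four merge passes; here the five natural runs need only three, and already sorted input needs none beyond the detection pass.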

Tournament replacement selection sorts are used to gather the initial runs for external sorting algorithms.

Analysis

In sorting n objects, merge sort has an average and worst-case performance of O(n log n). If the running time of merge sort for a list of length n is T(n), then the recurrence T(n) = 2T(n/2) + n follows from the definition of the algorithm (apply the algorithm to two lists of half the size of the original list, and add the n steps taken to merge the resulting two lists). The closed form follows from the master theorem for divide-and-conquer recurrences.

In the worst case, the number of comparisons merge sort makes is given by the sorting numbers. These numbers are equal to or slightly smaller than (n ⌈lg n⌉ − 2^⌈lg n⌉ + 1), which is between (n lg n − n + 1) and (n lg n + n + O(lg n)).[5]
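The expression can be compared against a direct count. The sketch below assumes a top-down merge sort that splits n elements into ⌊n/2⌋ and ⌈n/2⌉ and whose merge of p and q elements needs at most p + q − 1 comparisons; under those assumptions the worst-case count matches the expression exactly. The function names are hypothetical.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def worst_case_comparisons(n):
    """W(n) = W(floor(n/2)) + W(ceil(n/2)) + (n - 1), with W(0) = W(1) = 0."""
    if n <= 1:
        return 0
    half = n // 2
    return worst_case_comparisons(half) + worst_case_comparisons(n - half) + n - 1


def sorting_number(n):
    """n * ceil(lg n) - 2**ceil(lg n) + 1, for n >= 1."""
    ceil_lg = (n - 1).bit_length()  # equals ceil(log2(n)) for n >= 1
    return n * ceil_lg - 2 ** ceil_lg + 1


for n in range(1, 1025):
    assert worst_case_comparisons(n) == sorting_number(n)

print([sorting_number(n) for n in range(1, 9)])  # [0, 1, 3, 5, 8, 11, 14, 17]
```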

For large n and a randomly ordered input list, merge sort's expected (average) number of comparisons approaches α·n fewer than the worst case, where α = −1 + Σ_{k=0}^{∞} 1/(2^k + 1) ≈ 0.2645.

In the worst case, merge sort does about 39% fewer comparisons than quicksort does in the average case. In terms of moves, merge sort's worst case complexity is O(n log n)—the same complexity as quicksort's best case, and merge sort's best case takes about half as many iterations as the worst case.[citation needed]

Merge sort is more efficient than quicksort for some types of lists if the data to be sorted can only be efficiently accessed sequentially, and is thus popular in languages such as Lisp, where sequentially accessed data structures are very common. Unlike some (efficient) implementations of quicksort, merge sort is a stable sort.

Merge sort's most common implementation does not sort in place;[6] therefore, the memory size of the input must be allocated for the sorted output to be stored in (see below for versions that need only n/2 extra spaces).

Variants

Variants of merge sort are primarily concerned with reducing the space complexity and the cost of copying.

A simple alternative for reducing the space overhead to n/2 is to maintain left and right as a combined structure, copy only the left part of m into temporary space, and to direct the merge routine to place the merged output into m. With this version it is better to allocate the temporary space outside the merge routine, so that only one allocation is needed. The excessive copying mentioned previously is also mitigated, since the last pair of lines before the return result statement (function merge in the pseudo code above) become superfluous.
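The n/2-space idea can be sketched as follows. This is an illustration under the description above, not a reference implementation; `merge_half_space`, `merge_sort_half_space` and the buffer name `tmp` are assumed names. The temporary buffer is allocated once, outside the merge routine.

```python
def merge_half_space(m, begin, middle, end, tmp):
    """Merge sorted m[begin:middle] and m[middle:end] back into m.

    Only the left run is copied to tmp; the right run is read in place.
    """
    left_len = middle - begin
    tmp[:left_len] = m[begin:middle]
    i, j, k = 0, middle, begin
    while i < left_len and j < end:
        if tmp[i] <= m[j]:
            m[k] = tmp[i]
            i += 1
        else:
            m[k] = m[j]  # safe: the write index k never overtakes j
            j += 1
        k += 1
    while i < left_len:  # leftover left items; right leftovers are in place
        m[k] = tmp[i]
        i += 1
        k += 1


def merge_sort_half_space(m):
    """Sort list m in place using a single buffer of about n/2 elements."""
    tmp = [None] * ((len(m) + 1) // 2)  # allocated once for all merges

    def sort(begin, end):
        if end - begin <= 1:
            return
        middle = (begin + end) // 2
        sort(begin, middle)
        sort(middle, end)
        merge_half_space(m, begin, middle, end, tmp)

    sort(0, len(m))


data = [8, 3, 5, 1, 9, 2, 7]
merge_sort_half_space(data)
print(data)  # [1, 2, 3, 5, 7, 8, 9]
```

If the left run is exhausted first, the remaining right-run elements are already in their final positions, so no trailing copy of the right run is needed.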

One drawback of merge sort, when implemented on arrays, is its O(n) working memory requirement. Several in-place variants have been suggested:

- Katajainen et al. present an algorithm that requires a constant amount of working memory: enough storage space to hold one element of the input array, and additional space to hold O(1) pointers into the input array. They achieve an O(n log n) time bound with small constants, but their algorithm is not stable.[7]

- Several attempts have been made at producing an in-place merge algorithm that can be combined with a standard (top-down or bottom-up) merge sort to produce an in-place merge sort. In this case, the notion of 'in-place' can be relaxed to mean 'taking logarithmic stack space', because standard merge sort requires that amount of space for its own stack usage. It was shown by Geffert et al. that in-place, stable merging is possible in O(n log n) time using a constant amount of scratch space, but their algorithm is complicated and has high constant factors: merging arrays of length n and m can take 5n + 12m + o(m) moves.[8] Bing-Chao Huang and Michael A. Langston[9] presented a simpler, practical in-place merge algorithm that merges sorted lists in linear time using a fixed amount of additional space. Both approaches build on the work of Kronrod and others. On average, their algorithm is slower than standard merge sort algorithms, which are free to use O(n) temporary memory cells, by less than a factor of two. Although the algorithm is fast in practice, it is unstable for some lists; using similar concepts, the authors were able to solve this problem. Other in-place algorithms include SymMerge, which takes O((n + m) log (n + m)) time in total and is stable.[10] Plugging such an algorithm into merge sort increases its complexity to the non-linearithmic, but still quasilinear, O(n (log n)^2).

- A modern stable, linear-time, in-place merging algorithm is used by block merge sort.

An alternative to reduce the copying into multiple lists is to associate a new field of information with each key (the elements in m are called keys). This field will be used to link the keys and any associated information together in a sorted list (a key and its related information is called a record). Then the merging of the sorted lists proceeds by changing the link values; no records need to be moved at all. A field which contains only a link will generally be smaller than an entire record so less space will also be used. This is a standard sorting technique, not restricted to merge sort.

Use with tape drives

An external merge sort is practical to run using disk or tape drives when the data to be sorted is too large to fit into memory. External sorting explains how merge sort is implemented with disk drives. A typical tape drive sort uses four tape drives. All I/O is sequential (except for rewinds at the end of each pass). A minimal implementation can get by with just two record buffers and a few program variables.

Naming the four tape drives as A, B, C, D, with the original data on A, and using only 2 record buffers, the algorithm is similar to Bottom-up implementation, using pairs of tape drives instead of arrays in memory. The basic algorithm can be described as follows:

- Merge pairs of records from A; writing two-record sublists alternately to C and D.

- Merge two-record sublists from C and D into four-record sublists; writing these alternately to A and B.

- Merge four-record sublists from A and B into eight-record sublists; writing these alternately to C and D

- Repeat until you have one list containing all the data, sorted—in log2(n) passes.

Instead of starting with very short runs, usually a hybrid algorithm is used, where the initial pass will read many records into memory, do an internal sort to create a long run, and then distribute those long runs onto the output set. The step avoids many early passes. For example, an internal sort of 1024 records will save nine passes. The internal sort is often large because it has such a benefit. In fact, there are techniques that can make the initial runs longer than the available internal memory.[11]

With some overhead, the above algorithm can be modified to use three tapes. O(n log n) running time can also be achieved using two queues, or a stack and a queue, or three stacks. In the other direction, using k > 2 tapes (and O(k) items in memory), we can reduce the number of tape operations by a factor of O(log k) by using a k/2-way merge.

A more sophisticated merge sort that optimizes tape (and disk) drive usage is the polyphase merge sort.

Optimizing merge sort

On modern computers, locality of reference can be of paramount importance in software optimization, because multilevel memory hierarchies are used. Cache-aware versions of the merge sort algorithm, whose operations have been specifically chosen to minimize the movement of pages in and out of a machine's memory cache, have been proposed. For example, the tiled merge sort algorithm stops partitioning subarrays when subarrays of size S are reached, where S is the number of data items fitting into a CPU's cache. Each of these subarrays is sorted with an in-place sorting algorithm such as insertion sort, to discourage memory swaps, and normal merge sort is then completed in the standard recursive fashion. This algorithm has demonstrated better performance[example needed] on machines that benefit from cache optimization. (LaMarca & Ladner 1997)
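The structure of a tiled merge sort can be sketched as below. The parameter `tile_size` stands in for S and its default value of 16 is arbitrary, not a measured cache size; the function names are assumptions. The sketch shows only the control flow and makes no claim about cache behaviour in Python.

```python
def insertion_sort_range(a, begin, end):
    """Sort a[begin:end] in place with insertion sort."""
    for i in range(begin + 1, end):
        key = a[i]
        j = i - 1
        while j >= begin and a[j] > key:
            a[j + 1] = a[j]
            j -= 1
        a[j + 1] = key


def tiled_merge_sort(a, tile_size=16):
    """Sort list a in place; tile_size plays the role of S and must be >= 1."""

    def sort(begin, end):
        if end - begin <= tile_size:
            insertion_sort_range(a, begin, end)  # stop partitioning at size S
            return
        middle = (begin + end) // 2
        sort(begin, middle)
        sort(middle, end)
        left, right = a[begin:middle], a[middle:end]
        i = j = 0
        for k in range(begin, end):              # ordinary two-way merge
            if i < len(left) and (j >= len(right) or left[i] <= right[j]):
                a[k] = left[i]
                i += 1
            else:
                a[k] = right[j]
                j += 1

    sort(0, len(a))


data = [(i * 37) % 101 for i in range(100)]
tiled_merge_sort(data, tile_size=8)
print(data[:5])  # [0, 1, 2, 3, 4]
```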

Kronrod (1969) suggested an alternative version of merge sort that uses constant additional space. This algorithm was later refined. (Katajainen, Pasanen & Teuhola 1996)

Also, many applications of external sorting use a form of merge sorting where the input gets split up into a higher number of sublists, ideally a number for which merging them still makes the currently processed set of pages fit into main memory.

Parallel merge sort

Merge sort parallelizes well due to its use of the divide-and-conquer method. Several parallel variants are discussed in the third edition of Cormen, Leiserson, Rivest, and Stein's Introduction to Algorithms.[12] The first of these can be expressed very easily with fork and join keywords.
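The fork/join structure can be imitated in Python with threads: starting a thread plays the role of fork, and `join()` plays the role of join. This is a structural sketch only; the names `parallel_merge_sort` and `fork_depth` are assumptions, and in standard CPython the global interpreter lock prevents the threads from running Python code in parallel, so no speedup should be expected from it.

```python
import threading


def merge(left, right):
    """Sequential merge of two sorted lists (stable)."""
    result, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            result.append(left[i])
            i += 1
        else:
            result.append(right[j])
            j += 1
    return result + left[i:] + right[j:]


def parallel_merge_sort(items, fork_depth=2):
    """Return a sorted copy; fork a thread for the left half while depth remains."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    if fork_depth > 0:
        result = {}

        def sort_left():
            result["left"] = parallel_merge_sort(items[:mid], fork_depth - 1)

        worker = threading.Thread(target=sort_left)
        worker.start()                                          # fork
        right = parallel_merge_sort(items[mid:], fork_depth - 1)
        worker.join()                                           # join
        left = result["left"]
    else:
        left = parallel_merge_sort(items[:mid], 0)
        right = parallel_merge_sort(items[mid:], 0)
    return merge(left, right)                                   # serial merge


print(parallel_merge_sort([7, 3, 9, 1, 4, 8, 2, 6]))  # [1, 2, 3, 4, 6, 7, 8, 9]
```

Limiting the fork depth keeps the number of threads small (at most 2^fork_depth run at once); the merge itself remains sequential, as in the simple variant described here.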

This algorithm is a trivial modification of the serial version, and its speedup is not impressive: when executed on an infinite number of processors, it runs in Θ(n) time, which is only a Θ(log n) improvement on the serial version. A better result can be obtained by using a parallelized merge algorithm, which gives parallelism Θ(n / (log n)^2), meaning that this type of parallel merge sort runs in Θ((log n)^3) time if enough processors are available.[12] Such a sort can perform well in practice when combined with a fast stable sequential sort, such as insertion sort, and a fast sequential merge as a base case for merging small arrays.[13]

Merge sort was one of the first sorting algorithms where optimal speed up was achieved, with Richard Cole using a clever subsampling algorithm to ensure O(1) merge.[14] Other sophisticated parallel sorting algorithms can achieve the same or better time bounds with a lower constant. For example, in 1991 David Powers described a parallelized quicksort (and a related radix sort) that can operate in O(log n) time on a CRCW parallel random-access machine (PRAM) with n processors by performing partitioning implicitly.[15] Powers[16] further shows that a pipelined version of Batcher's Bitonic Mergesort at O((log n)^2) time on a butterfly sorting network is in practice actually faster than his O(log n) sorts on a PRAM, and he provides detailed discussion of the hidden overheads in comparison, radix and parallel sorting.

Comparison with other sort algorithms

Although heapsort has the same time bounds as merge sort, it requires only Θ(1) auxiliary space instead of merge sort's Θ(n). On typical modern architectures, efficient quicksort implementations generally outperform mergesort for sorting RAM-based arrays.[citation needed] On the other hand, merge sort is a stable sort and is more efficient at handling slow-to-access sequential media. Merge sort is often the best choice for sorting a linked list: in this situation it is relatively easy to implement a merge sort in such a way that it requires only Θ(1) extra space, and the slow random-access performance of a linked list makes some other algorithms (such as quicksort) perform poorly, and others (such as heapsort) completely impossible.

As of Perl 5.8, merge sort is its default sorting algorithm (it was quicksort in previous versions of Perl). In Java, the Arrays.sort() methods use merge sort or a tuned quicksort depending on the datatypes and for implementation efficiency switch to insertion sort when fewer than seven array elements are being sorted.[17] The Linux kernel uses merge sort for its linked lists.[18] Python uses Timsort, another tuned hybrid of merge sort and insertion sort, that has become the standard sort algorithm in Java SE 7 (for arrays of non-primitive types),[19] on the Android platform,[20] and in GNU Octave.[21]

Notes

1. Skiena (2008, p. 122)

2. Knuth (1998, p. 158)

3. Katajainen, Jyrki; Träff, Jesper Larsson (March 1997). 'A meticulous analysis of mergesort programs' (PDF). Proceedings of the 3rd Italian Conference on Algorithms and Complexity. Italian Conference on Algorithms and Complexity. Rome. pp. 217–228. CiteSeerX 10.1.1.86.3154. doi:10.1007/3-540-62592-5_74.

4. Powers, David M. W. and McMahon Graham B. (1983), 'A compendium of interesting prolog programs', DCS Technical Report 8313, Department of Computer Science, University of New South Wales.

5. The worst case number given here does not agree with that given in Knuth's Art of Computer Programming, Vol 3. The discrepancy is due to Knuth analyzing a variant implementation of merge sort that is slightly sub-optimal.

6. Cormen; Leiserson; Rivest; Stein. Introduction to Algorithms. p. 151. ISBN 978-0-262-03384-8.

7. Katajainen, Jyrki; Pasanen, Tomi; Teuhola, Jukka (1996). 'Practical in-place mergesort'. Nordic J. Computing. 3 (1): 27–40. CiteSeerX 10.1.1.22.8523.

8. Geffert, Viliam; Katajainen, Jyrki; Pasanen, Tomi (2000). 'Asymptotically efficient in-place merging'. Theoretical Computer Science. 237: 159–181. doi:10.1016/S0304-3975(98)00162-5.

9. Huang, Bing-Chao; Langston, Michael A. (March 1988). 'Practical In-Place Merging'. Communications of the ACM. 31 (3): 348–352. doi:10.1145/42392.42403.

10. Kim, Pok-Son; Kutzner, Arne (2004). Stable Minimum Storage Merging by Symmetric Comparisons. European Symp. Algorithms. Lecture Notes in Computer Science. 3221. pp. 714–723. CiteSeerX 10.1.1.102.4612. doi:10.1007/978-3-540-30140-0_63. ISBN 978-3-540-23025-0.

11. Selection sort. Knuth's snowplow. Natural merge.

12. Cormen et al. 2009, pp. 797–805

13. Victor J. Duvanenko. 'Parallel Merge Sort'. Dr. Dobb's Journal & blog, and GitHub repo C++ implementation.

14. Cole, Richard (August 1988). 'Parallel merge sort'. SIAM J. Comput. 17 (4): 770–785. CiteSeerX 10.1.1.464.7118. doi:10.1137/0217049.

15. Powers, David M. W. Parallelized Quicksort and Radixsort with Optimal Speedup, Proceedings of International Conference on Parallel Computing Technologies. Novosibirsk. 1991.

16. David M. W. Powers, Parallel Unification: Practical Complexity, Australasian Computer Architecture Workshop, Flinders University, January 1995.

17. OpenJDK src/java.base/share/classes/java/util/Arrays.java @ 53904:9c3fe09f69bc

18. linux kernel /lib/list_sort.c

19. jjb. 'Commit 6804124: Replace 'modified mergesort' in java.util.Arrays.sort with timsort'. Java Development Kit 7 Hg repo. Archived from the original on 2018-01-26. Retrieved 24 Feb 2011.

20. 'Class: java.util.TimSort<T>'. Android JDK Documentation. Archived from the original on January 20, 2015. Retrieved 19 Jan 2015.

21. 'liboctave/util/oct-sort.cc'. Mercurial repository of Octave source code. Lines 23-25 of the initial comment block. Retrieved 18 Feb 2013. "Code stolen in large part from Python's, listobject.c, which itself had no license header. However, thanks to Tim Peters for the parts of the code I ripped-off."

References

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L.; Stein, Clifford (2009) [1990]. Introduction to Algorithms (3rd ed.). MIT Press and McGraw-Hill. ISBN 0-262-03384-4.

- Katajainen, Jyrki; Pasanen, Tomi; Teuhola, Jukka (1996). 'Practical in-place mergesort'. Nordic Journal of Computing. 3. pp. 27–40. ISSN 1236-6064. Archived from the original on 2011-08-07. Retrieved 2009-04-04.

- Knuth, Donald (1998). 'Section 5.2.4: Sorting by Merging'. Sorting and Searching. The Art of Computer Programming. 3 (2nd ed.). Addison-Wesley. pp. 158–168. ISBN 0-201-89685-0.

- Kronrod, M. A. (1969). 'Optimal ordering algorithm without operational field'. Soviet Mathematics - Doklady. 10. p. 744.

- LaMarca, A.; Ladner, R. E. (1997). 'The influence of caches on the performance of sorting'. Proc. 8th Ann. ACM-SIAM Symp. on Discrete Algorithms (SODA97): 370–379. CiteSeerX 10.1.1.31.1153.

- Skiena, Steven S. (2008). '4.5: Mergesort: Sorting by Divide-and-Conquer'. The Algorithm Design Manual (2nd ed.). Springer. pp. 120–125. ISBN 978-1-84800-069-8.

- Sun Microsystems. 'Arrays API (Java SE 6)'. Retrieved 2007-11-19.

- Oracle Corp. 'Arrays (Java SE 10 & JDK 10)'. Retrieved 2018-07-23.

External links

- Animated Sorting Algorithms: Merge Sort at the Wayback Machine (archived 6 March 2015) – graphical demonstration